Text cleaner remove punctuation

3/24/2023

We will take a small example and understand the difference from nltk.stem import PorterStemmer from nltk.tokenize import word_tokenize ps = PorterStemmer() # choose some words to be stemmed words = for w in words: print(w, “ : “, ps.stem(w)) #output connect : connect connected : connect connection : connect connecting : connect connects : connect #Lemma from nltk.stem import WordNetLemmatizer lemmatizer = WordNetLemmatizer() print("studies:", lemmatizer.lemmatize("studies")) print("corpora :", lemmatizer.lemmatize("corpora")) print("better :", lemmatizer.lemmatize("better", pos ="a")) #output studies: study corpora : corpus better : good Refer the below link for better understanding It observes position and Parts of speech of a word before striping anything It usually refers doing things properly with the use of a vocabulary and morphological analysis of words. In Lemmatization root word is called Lemma. “Lemmatization, unlike Stemming, reduces the inflected words properly ensuring that the root word belongs to the language. Stemming is a rule based approach, it strips inflected words based on common prefixes and suffixes.įor example: Common suffix like: “es”, “ing”, “pre” etc. “Stemming is the process of reducing inflection in words to their root forms such as mapping a group of words to the same stem even if the stem itself is not a valid word in the Language.” so we will see what happens with this and.” stop_words = set(stopwords.words(‘english’)) word_tokens = word_tokenize(input_text) output_text = output = for w in word_tokens: if w not in stop_words: output.append(w) print(word_tokens) print(output) #Printing Word Tokens and output(stop words removed)#

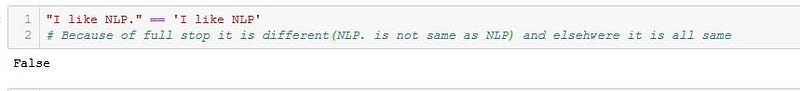

from rpus import stopwords from nltk.tokenize import word_tokenize input_text = “I am passing the input sentence here. You can use the following template to remove stop words from your text. Nltk (natural language tool kit) offers functions like tokenize and stopwords. For example, if you see the below example we can see the stopwords are removed. They can safely be ignored without sacrificing the meaning of the sentence. Stopwords are the words which does not add much meaning to a sentence. Step 1 and 2 are compiled into a function which is a template for basic text cleaning.You can use the following template based on your purpose of cleaning.Ĭode: input_text='''Iam ', ' ', text) #To remove the punctuations text = anslate(str.maketrans(' ',' ',string.punctuation)) #will consider only alphabets and numerics text = re.sub('',' ',text) #will replace newline with space text = re.sub("\n"," ",text) #will convert to lower case text = text.lower() # will split and join the words text=' '.join(text.split()) return text #Running the Funtion new_text=clean_text(input_text) #Output: iam cleaning the text including 123 Stemming vs Lemmatization(which one to choose?).Model Accuracy depends on how well the text is cleaned before training any model.There are many datasets that can be accessed from the link below:ĭata Preprocessing must include the follows: Model building(Of course the interesting part!!).Data Preprocessing(Very Important Step).The End to End process to build any product using NLP is as follows: Alternately it is also called Text Cleaning. So consider the text contains different symbols and words which doesn't convey meaning to the model while training.So we will remove them before feeding to the model in an efficient way.This method is called Data Preprocessing. The text is the main input for any type of model like classification, Q
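To make the contrast between rule-based stemming and vocabulary-based lemmatization concrete, here is a minimal sketch that runs without NLTK. It is a toy illustration only: it is not the Porter algorithm and does not use WordNet. The names `SUFFIXES`, `LEMMA_TABLE`, `toy_stem` and `toy_lemmatize` are made up for this example, and both the suffix list and the mini-vocabulary are assumptions chosen to keep it short.

```python
# Toy illustration only: NOT the Porter algorithm and NOT WordNet.

# Hypothetical suffix rules, checked in this order.
SUFFIXES = ("ing", "ed", "es", "s")

# Hypothetical mini-vocabulary mapping inflected forms to their lemma.
LEMMA_TABLE = {
    "studies": "study",
    "corpora": "corpus",
    "better": "good",
}


def toy_stem(word):
    """Strip the first matching suffix, keeping at least three characters."""
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word


def toy_lemmatize(word):
    """Look the word up in the vocabulary; fall back to the word itself."""
    return LEMMA_TABLE.get(word, word)


if __name__ == "__main__":
    for w in ["connected", "connecting", "studies", "corpora", "better"]:
        print(w, ":", toy_stem(w), "|", toy_lemmatize(w))
```

With these rules, the stemmer turns "studies" into "studi", which is not a valid word, while the lookup returns "study". That is the trade-off in miniature: suffix stripping is cheap and needs no dictionary, whereas a lemma lookup only works for words the vocabulary knows about.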
